Are Large Language Models for Education Reliable for All Languages?

BEA Workshop @ACL

Abstract

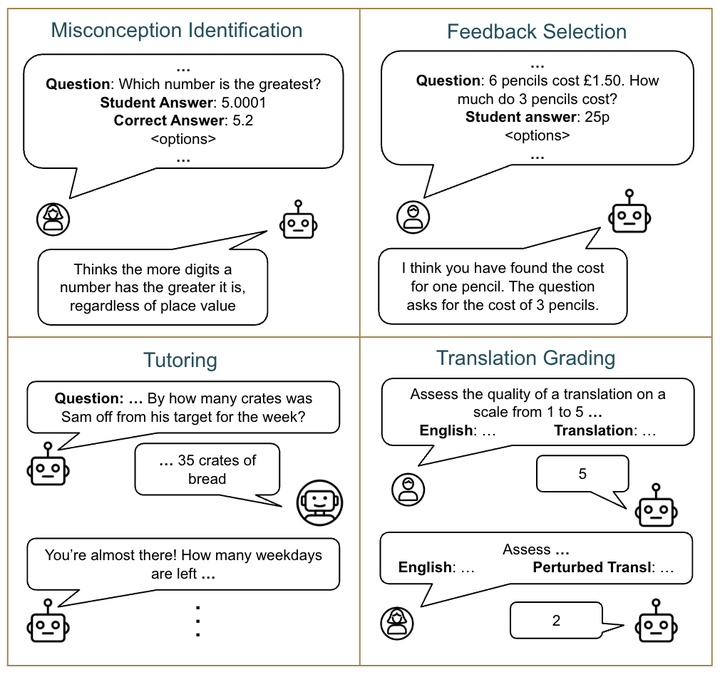

Large language models (LLMs) are increasingly being adopted in educational settings. These applications extend beyond English, even though current LLMs remain primarily English-centric. In this work, we examine whether their use in educational settings in non-English languages is warranted. We evaluate popular LLMs on four educational tasks (identifying student misconceptions, providing targeted feedback, interactive tutoring, and grading translations) in eight languages (Mandarin, Hindi, Arabic, German, Farsi, Telugu, Ukrainian, Czech) in addition to English. We find that performance on these tasks corresponds roughly to how well each language is represented in the training data, with lower-resource languages showing poorer task performance. However, at least some models largely maintain their performance across all languages. We therefore recommend that practitioners verify that an LLM works well in the target language for their educational task before deployment.